"dirty" block: a cache block that has been written to but which has not been copied back to main memory is "dirty." It must be copied back to main memory when that block is discarded.

Write through: on a write to memory, memory is written directly (or the write goes into a write buffer, discussed below, but either way the write is issued immediately). If there is a block in the cache for that address, it is updated also.

Write back: on a write to memory, if the address being written is mapped by a cache block, that block is updated and marked as dirty. Otherwise the block is either updated in memory directly, or read into the cache, written there, and written back to memory later (see write+allocate and write+noallocate below). If the same block of memory is written repeatedly, a write-back cache can save a lot of memory stalls.

A direct mapped cache of size M blocks maps memory addresses directly into cache blocks. The mapping function could be anything, but for the context of H&P assume that it is a modulo operation (the cache block that a main memory block with address A maps to is given by A % M). Observation: a direct mapped cache that uses mod to determine the destination block will be mapped _linearly_ into memory. Assume a direct mapped cache of size 5 (in blocks): memory blocks 0 through 4 land in cache blocks 0 through 4, memory block 5 wraps around to cache block 0, and so on.

What's interesting about this is that if we want to cache memory that is (typically) going to be accessed linearly, a direct mapped cache is a pretty good choice, because any block fetched is likely to be fully used (i.e., all of the words in the block are going to be used) and we can optimize the design to load more than one block at once (like two, or three) into sequential cache memory blocks. What kind of memory is accessed sequentially? INSTRUCTION MEMORY. Except for branches (conditional and unconditional), the stretch of memory occupied by an instruction stream is entirely linear. Even better, it is only accessed in one direction - you never execute code backwards.
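To make the A % M mapping and the two write policies concrete, here is a minimal sketch. The function names (`cache_index`, `count_memory_writes`) are made up for illustration, and the model makes simplifying assumptions that are not from H&P: it tracks whole blocks only, the write-back cache allocates on a write miss, and any dirty blocks are flushed at the end of the run.

```python
M = 5  # number of cache blocks, matching the size-5 example


def cache_index(block_address, num_blocks=M):
    """Direct mapped placement: memory block A goes to cache block A % M."""
    return block_address % num_blocks


def count_memory_writes(write_sequence, policy, num_blocks=M):
    """Count block writes that reach main memory for a sequence of writes.

    write_sequence: memory block addresses being written, in order.
    policy: "write-through" or "write-back" (hypothetical labels).
    """
    tags = [None] * num_blocks    # which memory block each cache block holds
    dirty = [False] * num_blocks  # written, but not yet copied back to memory
    memory_writes = 0
    for a in write_sequence:
        i = cache_index(a, num_blocks)
        if policy == "write-through":
            # Every write is issued to memory immediately.
            memory_writes += 1
        else:
            if tags[i] != a:
                # Miss: the old block is discarded. If it was dirty,
                # it has to be copied back to memory first.
                if dirty[i]:
                    memory_writes += 1
                tags[i] = a
            dirty[i] = True  # the write only touches the cached copy
    if policy == "write-back":
        # Assumption: flush whatever is still dirty at the end of the run.
        memory_writes += sum(dirty)
    return memory_writes


print([cache_index(a) for a in range(7)])            # [0, 1, 2, 3, 4, 0, 1]
print(count_memory_writes([3] * 10, "write-through"))  # 10
print(count_memory_writes([3] * 10, "write-back"))     # 1
```

Ten writes to the same block cost ten memory writes with write through but only one with write back, which is the "save a lot of memory stalls" case. If the writes alternate between two blocks that share a cache block (say 0 and 5), the write-back cache has to copy a dirty block back on every miss and the advantage disappears.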
(3) Measures such as "work per cycle" or "instructions per clock" are meaningless metrics for comparing different architectures. It isn't just a matter of cranking the clockspeed up. (This is relevant to comparisons in H&P of the 200MHz 21064 Alpha AXP to the IBM 70MHz POWER2.)

(4) All caches are broken into blocks of fixed size. Except as noted, the size of all caches is an integer multiple of the block size. Caches usually contain a power of 2 # of blocks but this isn't a requirement. Blocks contain some number of words, depending on their size.

(5) Caches are loaded in terms of blocks. A block is the smallest unit that may be copied to or from memory. For small moves (accessing one byte in a block, or writing one byte in a block) copying the whole block wastes some memory bandwidth but this is a necessary concession to design practicality.

Destination block: the block in the cache or in memory to be written to (e.g., when loading, the destination block is in the cache; when writing a changed cache block to memory, the destination block is in main memory).

Source block: the block in main memory to be copied into the cache, or the block in the cache being written back to main memory.

Discard / discarded: a discard is a block that has been flushed or removed from the cache and replaced with a block newly read from memory.

Tag: some set of bits attached to a block that define its characteristics (i.e., the address it is currently mapped to, whether it is "dirty" or not, etc.).

Thrash: load and unload a cache block repeatedly - the cache loads a block, then some other data is accessed, so the block is flushed and the new data is loaded, and then that is flushed and the original is loaded again, and so on. Thrashing can reduce a cache to the same performance as (or worse than) a system with no cache at all.

There's a pretty damn good reason for direct mapped and set-associative caches to exist, since modern CPUs almost exclusively use them.
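Thrashing is easy to reproduce in a toy model. The sketch below assumes a direct mapped cache with A % M placement and works in whole-block addresses; `simulate` is a made-up helper name, not anything from H&P.

```python
def simulate(block_addresses, num_blocks):
    """Return (hits, misses) for a direct mapped cache using A % M placement."""
    tags = [None] * num_blocks  # tag = memory block currently held by each cache block
    hits = misses = 0
    for a in block_addresses:
        i = a % num_blocks
        if tags[i] == a:
            hits += 1
        else:
            misses += 1
            tags[i] = a  # discard the old block and load the new one
    return hits, misses


# Blocks 0 and 5 both map to cache block 0 when M = 5, so they keep
# evicting each other: this access pattern thrashes.
thrashing = [0, 5] * 4
# Blocks 0 and 1 map to different cache blocks and can coexist.
friendly = [0, 1] * 4

print(simulate(thrashing, 5))  # (0, 8)
print(simulate(friendly, 5))   # (6, 2)
```

Both patterns touch only two blocks eight times, yet the first never hits. Every one of its accesses pays the full cost of a memory load, which is why a thrashing cache can be no better than having no cache at all.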

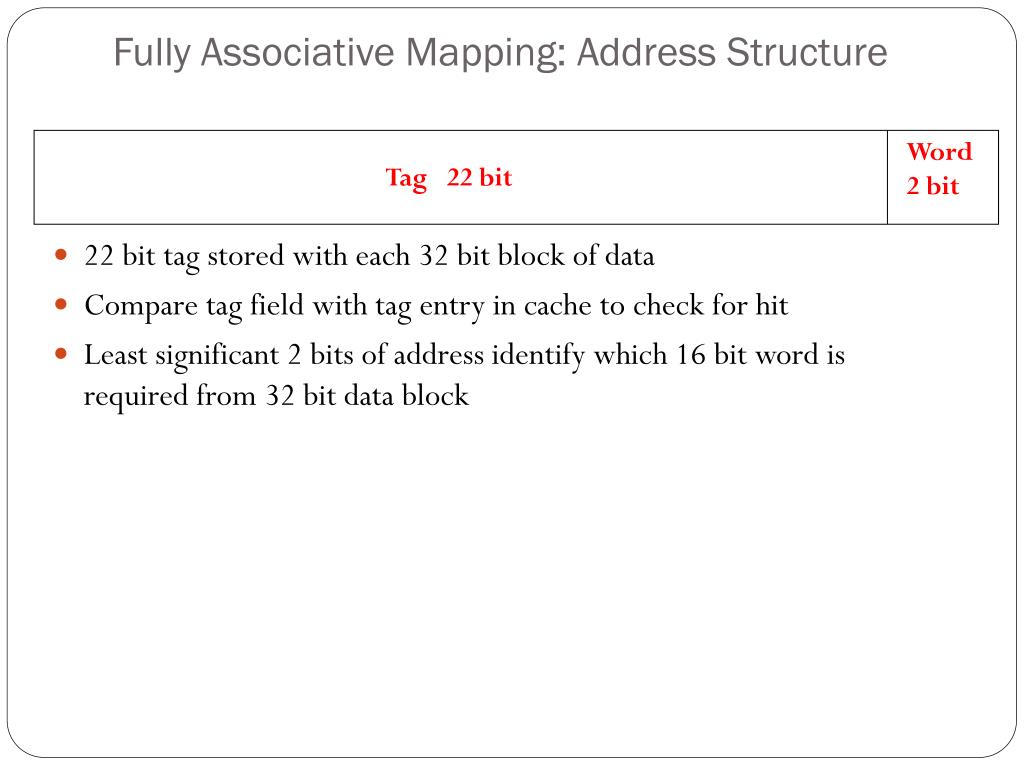

For instance, one might ask why direct and set-associative caches (see below) would even need to exist when fully associative caches are so much more flexible.

(1) All memory is addressed, stored, accessed, written to, copied, moved, and so on in a linear way. Square bitmaps are stored one scanline after another, rectangular arrays of numbers are stored in memory as a series of linearly appended rows, and so on. Caches work by mapping blocks of slow main RAM into fast cache RAM. This is accomplished by copying the contents into the faster cache.

(2) You usually can't improve anything in computers without giving up a little of something else. If you could, somebody would already have done it and you wouldn't be debating it.
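The "linearly appended rows" idea can be sketched in a few lines. This is only an illustration of row-major layout; `linear_offset` and the 4x3 grid are hypothetical names and values chosen for the example.

```python
def linear_offset(row, col, width):
    """Offset of element (row, col) when rows are stored one after another."""
    return row * width + col


width, height = 4, 3
# A small rectangular array, with values chosen so each cell is recognizable.
grid = [[10 * r + c for c in range(width)] for r in range(height)]
# The same data as it would sit in memory: row 0, then row 1, then row 2.
flat = [value for row in grid for value in row]

print(flat[linear_offset(2, 1, width)])  # 21, the same as grid[2][1]
```

Walking along a row touches consecutive offsets, so neighbouring elements tend to sit in the same block. Walking down a column jumps by `width` elements each step.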

What's different about a fully associative cache?

What are some key points I need to understand this section?
